In 2010, two Wellesley researchers did some clever Twitter-spam forensics in the wake of the Scott Brown-Martha Coakley Senate showdown in Massachusetts, arguing that open, real-time technologies like Twitter and Google real-time search results provide "disproportionate exposure to personal opinions, fabricated content, unverified events, lies and misrepresentations that otherwise would not find their way in the first page, giving them the opportunity to spread virally."

Their paper inspired a team at Indiana University to create Truthy, a machine-learning system named after Stephen Colbert's now-famous neologism. It follows the creation and spread of memes and can spot the telltale signs of "astroturfing," i.e., pseudo-grassroots messaging and outreach. The maps it draws of how memes spread, true to the concept's origins in biology, show the same great diversity of simple but sophisticated organic structures that inspired the idea in the first place.

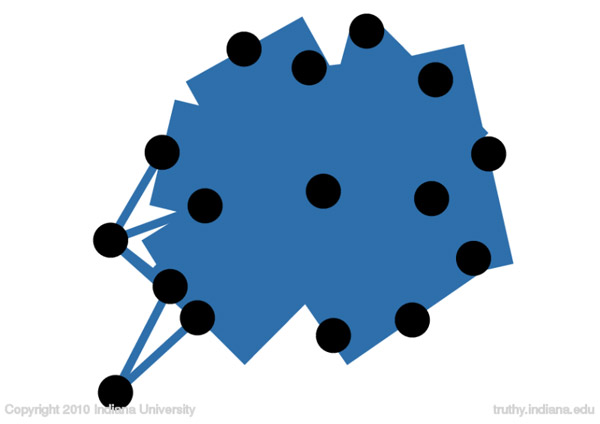

Here's a stupid, blunt Twitter spam campaign, for instance:

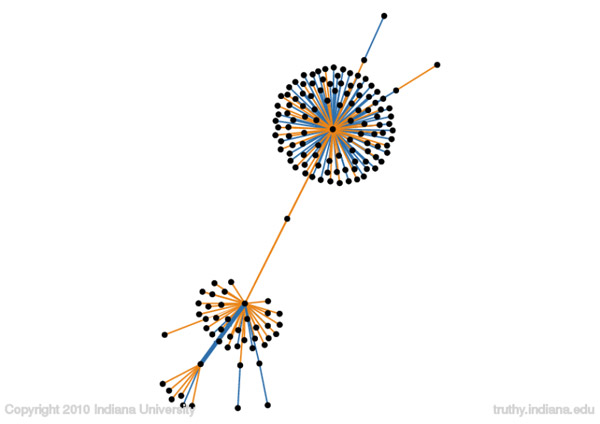

Blue lines represent retweets; as the authors explain, "a bunch of accounts collude by retweeting each other (as displayed by the thick blue edges) to promote a particular website." It's primitive and easily detected. By contrast, here's a portrait of polarization: a map of what happened when Lady Gaga got into it with John McCain on Twitter:

Famous people get retweeted a lot, but people also try to talk with them (replies are represented by the orange lines). The more legitimately grassroots a meme, the messier and less designed its map looks.
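To make the spam pattern described earlier concrete, here is a minimal Python sketch of one way to flag accounts that keep retweeting each other. It is not Truthy's actual method, which the article does not spell out; the function name `mutual_retweet_pairs`, the `min_each_way` threshold, and the sample accounts are all hypothetical, chosen only for illustration.

```python
from collections import Counter


def mutual_retweet_pairs(retweets, min_each_way=3):
    """Return account pairs that retweet each other heavily in BOTH directions.

    retweets: iterable of (retweeter, original_author) tuples.
    min_each_way: hypothetical threshold, not a value taken from Truthy.
    """
    counts = Counter(retweets)  # (retweeter, author) -> number of retweets
    pairs = {}
    for (a, b), n_ab in counts.items():
        n_ba = counts.get((b, a), 0)
        # a < b reports each pair once and skips self-retweets
        if a < b and n_ab >= min_each_way and n_ba >= min_each_way:
            pairs[(a, b)] = (n_ab, n_ba)
    return pairs


# Made-up sample data: two colluding accounts, plus a fan retweeting a celebrity.
retweets = (
    [("bot1", "bot2")] * 5
    + [("bot2", "bot1")] * 4
    + [("fan", "star")] * 6
    + [("star", "fan")] * 1
)

suspicious = mutual_retweet_pairs(retweets)
print(suspicious)
```

On this toy data only the `bot1`/`bot2` pair is flagged: the fan retweets the celebrity often, but the celebrity barely retweets back, which is the one-sided shape you would expect from ordinary fandom rather than collusion.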

We're increasingly reliant on computers to behave in "natural" ways, acting as proxies for more expensive and unreliable technology like "friends" and "editors." Zite skims my reading and suggests stuff it "thinks" I'll be interested in; Netflix recommends movies based on my history, often with hilarious results (aesthetics are always the second language of computers). Truthy has a lot of promise for rooting out TOS violators and keeping the field level.